The ViBe algorithm

In the paper “ViBe: A universal background subtraction algorithm for video sequences”, Olivier Barnich and Marc Van Droogenbroeck introduce several innovative mechanisms for motion detection:

Pixel model and classification process

Let v(x) denote the value, in a given Euclidean color space, of the pixel located at x in the image, and let v_i denote a background sample value with index i. Each background pixel x is modeled by a collection of N background sample values

M(x) = {v_1, v_2, ..., v_N}

taken in previous frames. To classify a pixel value v(x) according to its corresponding model M(x), we compare it to the closest values within the set of samples by defining a sphere S_R(v(x)) of radius R centered on v(x). The pixel value v(x) is then classified as background if the cardinality of the intersection of this sphere with the collection of model samples M(x) is larger than or equal to a given threshold. Classifying a pixel value v(x) therefore involves computing N distances between v(x) and the model samples, and N comparisons of those distances with the radius R.

The accuracy of the ViBe model is determined by two parameters only: the radius R of the sphere and the minimal cardinality. Experiments have shown that a unique radius R of 20 (for monochromatic images) and a cardinality of 2 are appropriate. There is no need to adapt these parameters during the background subtraction nor to change them for different pixel locations.
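The classification rule above can be sketched in a few lines of Python. This is a minimal illustration rather than the authors' implementation: the function name `is_background`, the representation of pixel values as tuples, and the use of a strict `<` comparison against the radius are assumptions made for the example. The defaults (radius 20, cardinality 2) are the values quoted above for monochromatic images.

```python
import math

def is_background(value, samples, radius=20, min_matches=2):
    """Classify one pixel value against its model M(x).

    value   -- pixel value as a tuple, e.g. (gray,) or (r, g, b)
    samples -- the N background samples stored for this pixel
    Returns True when at least `min_matches` samples fall inside the
    sphere of the given radius centered on `value`.
    """
    matches = 0
    for sample in samples:
        # Euclidean distance in the color space (assumed strict comparison)
        if math.dist(value, sample) < radius:
            matches += 1
            if matches >= min_matches:
                # Enough close samples found; no need to test the rest
                return True
    return False

# Two of the three samples are within 20 gray levels of 100
print(is_background((100,), [(95,), (110,), (200,)]))
# Only one sample is close enough
print(is_background((100,), [(95,), (150,), (200,)]))
```

The early exit means that, for most background pixels, far fewer than N distances are actually computed.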

Background model initialization from a single frame

Many popular techniques described in the literature need a sequence of several dozen frames to initialize their models. Such an approach makes sense from a statistical point of view, as it seems necessary to gather a significant amount of data in order to estimate the temporal distribution of the background pixels. But many applications cannot afford this learning period, as it may be necessary to

- segment the foreground of a sequence that is even shorter than the typical initialization sequence required by some background subtraction algorithms

- provide uninterrupted foreground detection, even in the presence of sudden light changes

A more convenient solution is to provide a technique that will initialize the background model from a single frame, so that the response to sudden illumination changes is straightforward: the existing background model is discarded and a new model is initialized instantaneously. What’s more, being able to provide a reliable foreground segmentation as early on as the second frame of a sequence has obvious benefits for short sequences in video-surveillance. Since there is no temporal information in a single frame, ViBe assumes that neighboring pixels share a similar temporal distribution, so it populates the pixel models with values found in the spatial neighborhood of each pixel. The size of the neighborhood needs to be chosen so that it is large enough to comprise a sufficient number of different samples, while keeping in mind that the statistical correlation between values at different locations decreases as the size of the neighborhood increases.

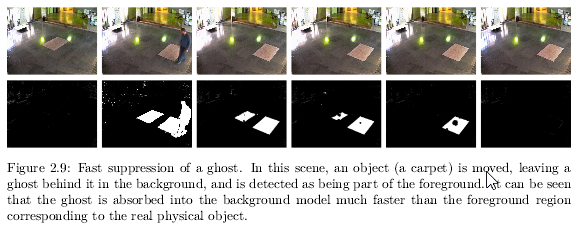

The only drawback is that the presence of a moving object in the first frame will introduce an artifact called a ghost (that is, a set of connected points, detected as in motion but not corresponding to any real moving object). In this particular case, the ghost is caused by the unfortunate initialization of pixel models with samples coming from the moving object. In subsequent frames, the object moves and uncovers the real background, which will be learned progressively through the regular model update process, making the ghost fade over time. ViBe’s update process ensures both a fast model recovery in the presence of a ghost and a slow incorporation of real moving objects into the background model.
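As a rough sketch of the single-frame initialization described in this section, the model of each pixel can be filled with values drawn at random from its spatial neighborhood. Several details below are assumptions chosen for illustration and are not stated in the text above: the function name `init_model`, the default of 20 samples per pixel, the 3x3 neighborhood (which here includes the pixel itself), and the clamping of coordinates at the image borders.

```python
import random

def init_model(frame, n_samples=20, rng=random):
    """Build a background model from a single grayscale frame.

    frame -- 2D list of pixel values, indexed as frame[y][x]
    Returns model[y][x], a list of `n_samples` values picked at random
    in the (assumed) 3x3 neighborhood of each pixel.
    """
    height, width = len(frame), len(frame[0])
    model = []
    for y in range(height):
        row = []
        for x in range(width):
            samples = []
            for _ in range(n_samples):
                # Random offset in {-1, 0, 1}, clamped to stay inside the image
                ny = min(max(y + rng.randint(-1, 1), 0), height - 1)
                nx = min(max(x + rng.randint(-1, 1), 0), width - 1)
                samples.append(frame[ny][nx])
            row.append(samples)
        model.append(row)
    return model

first_frame = [[10, 20, 30],
               [40, 50, 60],
               [70, 80, 90]]
model = init_model(first_frame, n_samples=5)
print(len(model[1][1]))  # one list of 5 samples per pixel
```

If a moving object is present in `first_frame`, its values end up in the models of the pixels it covers, which is exactly how the ghost described above arises.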

Updating the background model over time

The classification step of ViBe compares the current pixel value v_t(x) directly to the samples contained in the background model of the previous frame, M_{t-1}(x), at time t - 1. But which samples have to be memorized by the model and for how long? The classical approach to the updating of the background history is to discard and replace old values after a number of frames or after a given period of time.

Whether or not to include foreground pixel values in the model is a question that always arises for a sample-based background subtraction method: the model must be updated with new values, otherwise it will not adapt to changing conditions. It comes down to a choice between a conservative and a blind update scheme:

- a conservative update policy never includes a sample belonging to a foreground region in the background model; unfortunately, it also leads to deadlock situations and everlasting ghosts: a background sample incorrectly classified as foreground prevents its background pixel model from being updated, possibly indefinitely, which could cause a permanent misclassification

- a blind update is not sensitive to deadlocks: samples are added to the background model whether they have been classified as background or not; the principal drawback of this method is the poor detection of slow-moving targets, which are progressively included in the background model.

The ViBe update method incorporates three important components:

- a memoryless update policy, which ensures a smooth decaying lifespan for the samples stored in the background pixel models: instead of systematically removing the oldest sample from the pixel model, ViBe chooses the sample to be discarded randomly according to a uniform probability density function.

- random time subsampling: the random replacement policy allows the pixel model to cover a large (theoretically infinite) time window with a limited number of samples; but in the presence of periodic or pseudo-periodic background motions, the use of fixed subsampling intervals might prevent the background model from properly adapting to these motions. So when a pixel value has been classified as belonging to the background, a random process determines whether this value is used to update the corresponding pixel model.

- a mechanism that propagates background pixel samples spatially to ensure spatial consistency and to allow the adaptation of the background pixel models that are masked by the foreground: ViBe considers that neighboring background pixels share a similar temporal distribution and that a new background sample of a pixel should also update the models of neighboring pixels. According to this policy, background models hidden by the foreground will be updated with background samples from neighboring pixel locations from time to time. This allows a spatial diffusion of information regarding the background evolution that relies on samples classified exclusively as background. ViBe’s background model is thus able to adapt to a changing illumination and to structural evolutions (added or removed background objects) while relying on a strict conservative update scheme.
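The three components above can be combined into a single update step, sketched below for one pixel that has already been classified as background. This is an illustration under stated assumptions, not the reference implementation: the function name `update_model`, the subsampling factor of 16, the 3x3 neighborhood with clamped borders, and the use of the same probability for the spatial propagation are all choices made for the example.

```python
import random

def update_model(model, x, y, value, subsampling=16, rng=random):
    """Conservative update for a pixel at (x, y) classified as background.

    model -- model[y][x] is the list of samples for that pixel
    value -- the current pixel value v_t(x)
    Must only be called for background pixels (conservative policy).
    """
    height, width = len(model), len(model[0])

    # Random time subsampling: update with probability 1 / subsampling
    if rng.randrange(subsampling) == 0:
        samples = model[y][x]
        # Memoryless policy: the discarded sample is chosen uniformly
        samples[rng.randrange(len(samples))] = value

    # Spatial propagation: occasionally push the value into a neighbor's model
    if rng.randrange(subsampling) == 0:
        ny = min(max(y + rng.randint(-1, 1), 0), height - 1)
        nx = min(max(x + rng.randint(-1, 1), 0), width - 1)
        neighbor = model[ny][nx]
        neighbor[rng.randrange(len(neighbor))] = value

# Hypothetical 2x2 model with 3 samples per pixel
model = [[[0, 0, 0] for _ in range(2)] for _ in range(2)]
update_model(model, 0, 0, 99, subsampling=1)  # subsampling=1 forces both updates
print(model[0][0])
```

With uniform random replacement, a given sample survives each update of its model with probability (N - 1) / N, so its expected remaining lifespan does not depend on how long it has already been stored. This is what makes the policy memoryless.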

It is worth analysing the strengths of the ViBe algorithm:

- Faster ghost suppression: ViBe’s spatial update mechanism absorbs ghosts into the background model faster than it absorbs real static foreground objects, because the borders of foreground objects often exhibit colors that differ noticeably from those of the samples stored in the surrounding background pixel models. When a foreground object stops moving, the information propagation technique updates the pixel models located at its borders with samples coming from surrounding background pixels. But these samples are irrelevant: their colors do not match those of the borders of the object at all, so in subsequent frames the object remains in the foreground, since background samples cannot diffuse inside the foreground object via its borders. By contrast, a ghost area often shares similar colors with the surrounding background, so when background samples from the area surrounding the ghost diffuse inside the ghost, they are likely to match the actual color of the image at the locations where they are diffused; as a result, the ghost is progressively eroded until it disappears entirely.

- Resistance to camera displacements: small displacements are typically due to vibrations or wind and, with many background segmentation techniques, they cause significant numbers of false foreground detections. The spatial consistency of ViBe’s background model brings increased robustness against such small camera movements: since samples are shared between neighboring pixel models, small displacements of the camera introduce very few erroneous foreground detections. ViBe also has the capability of dealing with large displacements of the camera, at the price of a modification of the base algorithm. Since ViBe’s model is purely pixel-based, it can handle moving cameras by allowing pixel models to follow the corresponding physical pixels according to the movements of the camera (the movements of the camera can be estimated either using embedded motion sensors or directly from the video stream using dense optical flow).

- Resilience to noise: two factors must be credited for ViBe